How to detect cheating in exams using analytics

Cheating in exams is not a new phenomenon, but online tests have made it easier. Candidates use phones, shared answers, or even AI tools like ChatGPT to bypass effort.

The International Center for Academic Integrity (ICAI) found that as many as 95% of candidates admitted to some form of cheating during evaluations.

For recruiters, it affects trust, fairness, and even business. A bad hire from a fake test result can cost a company $15,000 or more.

That is where analytics comes in. It gives universities and companies a fair method to detect cheating in exams and protect the integrity of their results. Let’s find out how.

Why is detecting cheating in exams important?

Cheating in exams is not just about one candidate getting an unfair advantage. It affects everyone. If a candidate clears an exam by dishonest means, the result is no longer reliable.

For universities, this means degrees and grades lose value. Imagine a student passing with outside help but struggling later in real classes or jobs. It tarnishes the institution’s reputation.

For companies, the risk goes beyond just exam scores. If someone cheats in a pre-employment test and gets selected, the organization ends up investing money in training and onboarding a person who cannot actually do the job.

This leads to wasted resources, lower productivity, and the need to restart the hiring process from scratch.

The scale of the problem is large and global. During high-stakes competitive exams in India, the National Testing Agency flagged hundreds of suspicious cases of impersonation in a single session.

That is why detecting cheating in exams is important. It preserves the value of degrees and certifications and protects organizations from financial and reputational damage.

How does analytics track answer patterns in exams?

When candidates take an assessment, every answer they give creates a trail of data. Analytics studies this data to see if the answers follow a natural flow or if they look unusual.

Honest candidates usually get the easy questions right, struggle a bit on the hard ones, and show a mix of correct and incorrect responses. Cheating often leaves behind a very different pattern.

As psychometric researchers explain, “Cheating often leaves behind data patterns that are too unusual to be by chance. Analytics does not accuse; it highlights where closer review is needed.”

Person-Fit Statistics (PFS): “Do these answers make sense for this ability?”

This is a simple way to determine if the test-taker’s answer sheet accurately reflects their actual ability. For example, imagine a candidate answers very tough questions correctly but gets several easy ones wrong. That is not normal.

Analytics flags this as suspicious because it may indicate that the candidate used leaked answers or outside help for the difficult parts.
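
One simple person-fit measure is a count of Guttman errors: pairs of questions where the candidate missed the easier one but got the harder one right. The sketch below is a minimal illustration, not any particular platform's method. The function name and the use of the group's share of correct answers as the difficulty measure are assumptions made for this example.

```python
from typing import Sequence


def guttman_errors(responses: Sequence[int], p_correct: Sequence[float]) -> int:
    """Count (easier item wrong, harder item right) pairs for one candidate.

    responses: 1 for correct, 0 for incorrect, one entry per question.
    p_correct: share of the whole group that got each question right
               (a higher share means an easier question).
    """
    # Order the questions from easiest to hardest.
    order = sorted(range(len(responses)), key=lambda i: p_correct[i], reverse=True)
    ordered = [responses[i] for i in order]

    errors = 0
    wrong_so_far = 0  # easier questions answered incorrectly so far
    for r in ordered:
        if r == 0:
            wrong_so_far += 1
        else:
            # Each easier miss forms one error pair with this harder hit.
            errors += wrong_so_far
    return errors


p_correct = [0.9, 0.8, 0.6, 0.3, 0.1]
typical = [1, 1, 1, 0, 0]   # easy ones right, hard ones wrong
unusual = [0, 0, 1, 1, 1]   # easy ones wrong, hard ones right
print(guttman_errors(typical, p_correct), guttman_errors(unusual, p_correct))
```

A high count is only a reason to look closer. What counts as "high" depends on the test length and would need to be calibrated against the group.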

Response-time analysis: “Was that answer too fast (or too slow)?”

Time is another powerful clue. If a test taker answers a complex coding or math question in seconds, it suggests pre-knowledge or outside help.

On the other hand, if they spend unusually long on a very basic question, it may also point to dishonesty. Analytics compares each candidate’s timing against normal patterns to spot these anomalies.

Cluster and bicluster detection: “Who is moving together?”

Cheating is not always one-to-one. Sometimes small groups of candidates share answers.

Analytics can group candidates by their answer patterns and identify clusters where multiple individuals answered the same set of questions in a similar manner. Even if invigilators miss it live, the data will reveal it later.
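
A common starting point for this kind of grouping is to count how many identical wrong answers two candidates share, since matching correct answers are expected but matching mistakes are not. The sketch below assumes multiple-choice answers stored as one option letter per question. The function names and the `min_shared` threshold are illustrative choices, not a standard.

```python
from itertools import combinations


def shared_wrong_answers(a, b, key):
    """Number of questions where both candidates chose the same incorrect option."""
    return sum(1 for x, y, k in zip(a, b, key) if x == y and x != k)


def suspicious_pairs(answers, key, min_shared):
    """Return (id_a, id_b, shared) for pairs with at least `min_shared` identical wrong answers."""
    flagged = []
    for (id_a, ans_a), (id_b, ans_b) in combinations(sorted(answers.items()), 2):
        shared = shared_wrong_answers(ans_a, ans_b, key)
        if shared >= min_shared:
            flagged.append((id_a, id_b, shared))
    return flagged


key = "ABCDABCD"
answers = {
    "c1": "ABCDABCD",  # all correct
    "c2": "ABDDBBCA",  # three wrong answers
    "c3": "ABDDBBCD",  # two wrong answers, both identical to c2's
}
print(suspicious_pairs(answers, key, min_shared=2))
```

Pairs that show up together repeatedly can then be merged into larger clusters for review. This pairwise loop is fine for a classroom, but large cohorts would need a more scalable approach.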

In short, analytics does not need to catch a candidate in the act. By studying answer patterns, it can surface results that are highly unlikely to occur by chance.

How do response-time anomalies help detect cheating in exams?

The time a candidate spends on each question is one of the clearest signals analytics can use to detect cheating in exams.

In a normal situation, candidates tend to spend less time on easy questions and more time on difficult ones. But when the timing is unusual, it raises suspicion.

If someone answers a complex question in just a few seconds, it may mean they already had the answer, got outside help, or used an AI tool.

On the other hand, if a candidate spends far too long on very basic questions, it may suggest they were looking elsewhere for help or were distracted.

Analytics does not judge a candidate in isolation; instead, it compares each candidate’s response times to those of the larger group. A person who is far quicker or slower than the average stands out.

Timing alone is insufficient to accuse someone. Still, when combined with other signals, such as odd answer patterns or copy-like similarities, it becomes strong evidence.

This way, response-time anomalies help detect cheating in exams using analytics by exposing behavior that does not match typical test-taking patterns.
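
The comparison against the larger group can be sketched with a robust z-score on log response times, which uses the median so that a few extreme values do not distort the baseline. This is a minimal sketch under assumptions: the function name, the 3.5 cutoff, and the input format are illustrative, and all times must be greater than zero.

```python
import math
from statistics import median


def timing_outliers(times_by_candidate, threshold=3.5):
    """Flag candidates whose time on one question is far from the group median.

    times_by_candidate: {candidate_id: seconds spent on the question}
    Returns {candidate_id: robust z-score} for flagged candidates only.
    """
    # Response times are right-skewed, so compare them on a log scale.
    logs = {cid: math.log(t) for cid, t in times_by_candidate.items()}
    med = median(logs.values())
    # Median absolute deviation: a spread measure that resists outliers.
    mad = median(abs(v - med) for v in logs.values())
    if mad == 0:
        return {}  # no spread in the group, nothing to compare against

    flagged = {}
    for cid, v in logs.items():
        z = 0.6745 * (v - med) / mad
        if abs(z) > threshold:
            flagged[cid] = z
    return flagged


# Most candidates take about two minutes; one finishes in ten seconds.
times = {"a": 110, "b": 120, "c": 125, "d": 130, "e": 140, "f": 10}
print(timing_outliers(times))
```

A negative score means the candidate was much faster than the group, and a positive one means much slower. Either way, the flag is a prompt for review alongside other signals.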

How do behavioral and biometric analytics catch exam cheating?

Behavioral and biometric analytics focus on what candidates do during the assessment and whether the person taking the test is the right one. Analytics can utilize facial recognition to verify identity and determine if the same face remains present throughout the exam.

Liveness checks ensure the image is genuine and not a photo or deepfake. Eye movement and head tracking indicate whether a candidate is looking away from the screen too often, which may suggest they are seeking help off-camera.

Keystroke dynamics study the rhythm and style of typing, which works like a digital fingerprint, making impersonation harder. System logs also track activities such as switching tabs, copying and pasting actions, or adding a new monitor.

None of these signals alone proves cheating, but together they give strong clues. This way, behavioral and biometric analytics help detect cheating in exams by catching actions and identities that don’t fit normal exam behavior.

How can analytics detect AI-generated cheating in exams?

AI tools like ChatGPT can write essays, solve multiple-choice questions, or generate code in seconds. This speed and scale make it attractive for candidates who want quick answers. But analytics can spot signs that don’t match normal human behavior.

One of the clearest signals is the pattern of answers. Research from Florida State University in 2024 revealed that ChatGPT often excels at the most challenging questions but struggles with some of the simplest ones.

This “upside-down” pattern is not how most humans perform, so statistical analysis can catch it with very low error.

Another clue is unusual speed. If a candidate finishes a long essay or solves complex coding tasks far quicker than their peers, analytics marks this as suspicious. Humans typically exhibit small pauses, corrections, and hesitations, whereas AI outputs are instantaneous and appear too smooth.

It is important to remember that AI detectors are not perfect. Studies show they can mislabel essays from non-native English speakers as AI-generated.

That’s why organizations are advised to combine an AI detector with other analytics, like response-time anomalies and person-fit statistics, before making any hiring decision.
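
One way to put that advice into practice is to escalate a case only when more than one independent signal agrees. The sketch below is hypothetical: the signal names, the outcome labels, and the two-signal rule are assumptions for illustration, and real thresholds would need to be set by the organization.

```python
def review_priority(flags, min_signals=2):
    """Map independent analytics flags to a review decision.

    flags: {signal_name: bool}, for example an AI detector, a response-time
           check, and a person-fit check (all names are illustrative).
    Returns (decision, sorted list of the signals that were raised).
    """
    raised = sorted(name for name, hit in flags.items() if hit)
    if len(raised) >= min_signals:
        return "human_review", raised  # several signals agree: send to a reviewer
    if raised:
        return "monitor", raised       # a single signal is not enough to act on
    return "clear", raised


print(review_priority({"ai_detector": True, "response_time": False, "person_fit": False}))
print(review_priority({"ai_detector": True, "response_time": True, "person_fit": False}))
```

Even the strongest outcome here only routes the case to a person, so an AI-detector hit on its own never decides anything.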

What role does forensic analysis play after the exam?

Forensic analysis is like a second layer of protection. Even if cheating slips through during the live exam, the test data can be checked afterwards. This includes comparing answers, checking scores, and reviewing how the work was submitted.

A study shared by EDUCAUSE (2022) found that using post-exam data checks, such as similarity and timing reviews, helped reduce undetected cheating by nearly 25% in online courses. That shows how important it is to review the data after exams are finished.

| Method | What it does | Why it matters |
| --- | --- | --- |
| Similarity checks | Finds candidates with nearly identical answers | Helps uncover copying or collusion missed live |
| Score pattern analysis | Flags sudden high scores on tough questions | Detects question leaks or shared answer keys |
| Metadata review | Examines pasted text or code history | Shows if work was typed naturally or copied |
| Timing review | Looks at unusual start-to-finish times | Spots impersonation or pre-knowledge of questions |
| Human verification | Reviewer confirms cases flagged by analytics | Ensures fairness and avoids false accusations |

How can the setup of exams make analytics more effective?

The way an exam is built plays a big role in how well analytics can detect cheating. If tests are predictable, it’s easier for candidates to find shortcuts. However, when exams are set up carefully, cheating becomes more difficult, and analytics can perform its job more effectively.

1. Randomize the questions and answers

When every candidate gets the same exam in the same order, copying is easy. However, if the questions and their options are shuffled, the chances of two candidates having identical answers decrease. Analytics can then quickly flag cases where patterns still look too similar.

2. Use a large pool of questions

A small set of questions is risky because they get shared quickly. Having a big bank of questions makes it harder for candidates to rely on memory or leaked papers.

3. Create different versions of the same test

Multiple forms (such as A, B, and C versions) prevent neighbors from copying directly. Analytics also compares performance across versions. If candidates from different versions still have unusually close answers, it signals collusion.

4. Set fair but strict time limits

If an exam gives too much time, candidates can look up answers. If time is too short, it only adds stress. The right balance gives just enough time to think but not enough to search. Analytics then uses timing data to flag candidates who are much faster or slower than usual.

5. Add short oral checks for doubtful cases

Sometimes analytics flags a candidate, but the evidence is not clear. In such cases, a quick follow-up oral test (lasting just 3–5 minutes) can confirm whether the candidate truly understands the material. This makes the system fairer and reduces the likelihood of false accusations.
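
The first step above, randomizing questions and options, can be sketched with a seeded shuffle. Seeding by exam and candidate means each person's layout can be rebuilt later, so reviewers can map shuffled options back to the original ones before comparing answers. The function name and the seed format are assumptions made for this example.

```python
import random


def shuffled_exam(questions, candidate_id, exam_seed):
    """Return a per-candidate ordering of questions and answer options.

    questions: list of (question_id, [option, ...]) tuples.
    The same candidate_id and exam_seed always produce the same layout.
    """
    # A dedicated generator keeps this shuffle independent of global random state.
    rng = random.Random(f"{exam_seed}:{candidate_id}")
    order = list(questions)  # copy so the master list is not modified
    rng.shuffle(order)
    # Shuffle the options of each question as well.
    return [(qid, rng.sample(options, k=len(options))) for qid, options in order]


bank = [
    ("q1", ["A", "B", "C", "D"]),
    ("q2", ["A", "B", "C", "D"]),
    ("q3", ["A", "B", "C", "D"]),
]
print(shuffled_exam(bank, candidate_id="cand-17", exam_seed="spring-exam"))
```

Python's `random` module is not designed for security purposes, so a production system would likely need to keep the seed secret or use a stronger source of randomness.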

How does Testlify support cheating detection with analytics?

Cheating in exams manifests in various ways: some candidates copy answers, others complete tasks too quickly, and still others rely on AI or even impersonation.

Testlify tackles these problems step by step by combining analytics with proctoring features and fair review tools.

1. Unusual answers and patterns

Testlify highlights when a candidate’s answers don’t match their ability. For example, if someone solves the most complex coding questions but fails simple ones, the system flags this as suspicious. This helps examiners focus on cases that need review instead of going through every test manually.

2. Suspicious timing

Every question is logged with the time spent. If most candidates take two minutes to answer a math problem, but one person finishes in ten seconds, Testlify marks it for checking.

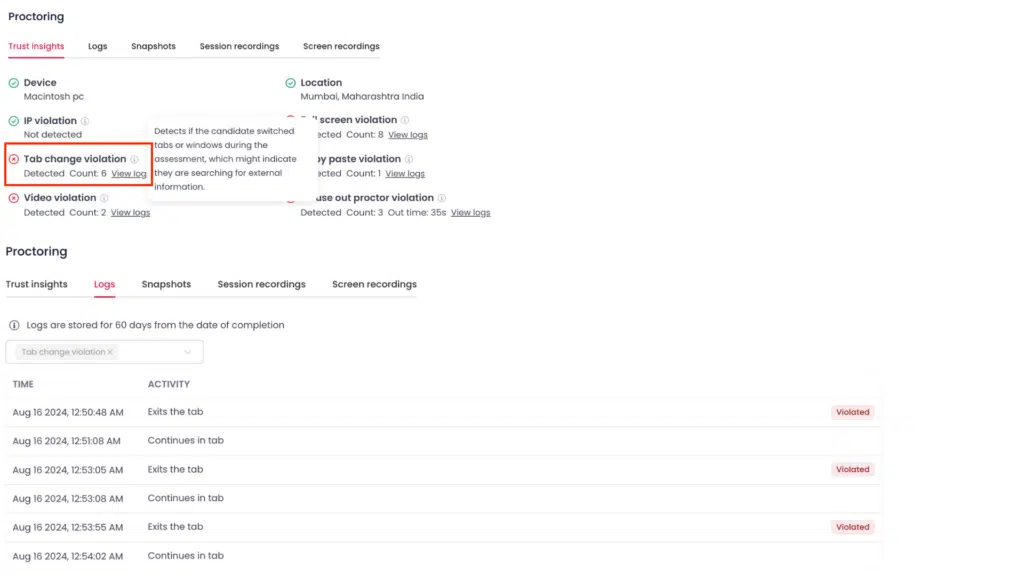

3. Identity and behavior monitoring

Testlify uses a live environment scan to confirm that the right person is taking the test and to track their surroundings.

Full-screen enforcement, tab tracking, and optional screen sharing make it harder to use external resources. Copy-paste blocking prevents answers from being copied and pasted directly from outside sources.

4. AI-generated answers

One significant risk today is candidates using tools like ChatGPT to write essays or generate code. Testlify addresses this in two ways:

- For subjective written answers, it has an AI-detection feature that scans responses and flags whether they are likely AI-generated. This reduces the risk of false results and gives examiners a clear signal.

- For coding exams, keystroke replay shows whether the code was typed step by step or pasted in. If code appears instantly with no typing history, it suggests that outside help may have been used.
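
The idea behind reviewing typing history can be illustrated with a simple check on an editor event log. This is not Testlify's implementation. The `EditEvent` structure, its fields, and the 30-character threshold are hypothetical, chosen only to show how text that arrives all at once differs from text typed key by key.

```python
from dataclasses import dataclass


@dataclass
class EditEvent:
    t: float          # seconds since the candidate opened the question
    chars_added: int  # characters inserted by this single editor event


def paste_like_events(events, max_chars_per_event=30):
    """Return events that insert more text at once than typing plausibly would."""
    return [e for e in events if e.chars_added > max_chars_per_event]


def pasted_share(events, max_chars_per_event=30):
    """Fraction of all inserted characters that arrived in paste-like events."""
    total = sum(e.chars_added for e in events)
    if total == 0:
        return 0.0
    pasted = sum(e.chars_added for e in paste_like_events(events, max_chars_per_event))
    return pasted / total


log = [EditEvent(1.0, 1), EditEvent(1.2, 1), EditEvent(5.0, 400)]
print(len(paste_like_events(log)), round(pasted_share(log), 3))
```

Editor features such as autocomplete can also insert several characters in one event, so a high share is a reason to replay the session, not proof of outside help.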

5. Fair review and transparency

Testlify does not automatically mark someone guilty. Instead, it consolidates answer data, timing logs, and proctoring records in a single, user-friendly dashboard. Human examiners can then make the final call. This ensures fairness and avoids punishing honest candidates.

6. Extra safeguards

- 3,000+ ready-made skill-based tests make it harder for candidates to rely on generic online answers.

- AI-powered video and audio interviews confirm communication skills and ensure the person taking the test matches the applicant.

- Integration with 100+ ATS platforms keeps hiring and exam records secure in one system.

- GDPR and FERPA compliance ensures candidate data is handled safely.

Conclusion

Analytics gives universities and companies a fair way to detect unusual answers, suspicious timing, AI-generated work, and even impersonation. Combined with human review, it protects honest candidates and ensures trustworthy results.

If you want a platform that brings these checks together in one place, Testlify can help. Try Testlify today and see how easy it is to run exams that are honest, reliable, and compliant.
